Google wants its users to “Google” what they see via their phone’s camera.

At the search engine’s annual conference, Google I/O, the company revealed “Google Lens,” which allows smartphones to process what’s going on in a photo or video. It combines buzzwords like artificial intelligence, computer vision, and visual search into one user-friendly function.

At the risk of hyperbole, the technological breakthrough could be revolutionary.

To put the power of Google Lens in perspective: a user can point the camera at a flower and find out what kind it is. Or if they aim it at a restaurant, they’ll see reviews, the menu, hours of operation, and whether there’s a table available to reserve.

With Google Lens, your smartphone camera won’t just see what you see, but will also understand what you see to help you take action. #io17 pic.twitter.com/viOmWFjqk1

— Google (@Google) May 17, 2017

This function is in its early stages, so how people will use it in five years could expand far beyond what was demoed at Google I/O. The fact that “Lens” also lets people connect to a Wi-Fi network simply by pointing the camera at the router’s settings sticker is a perfect example of the more conspicuous applications to come.

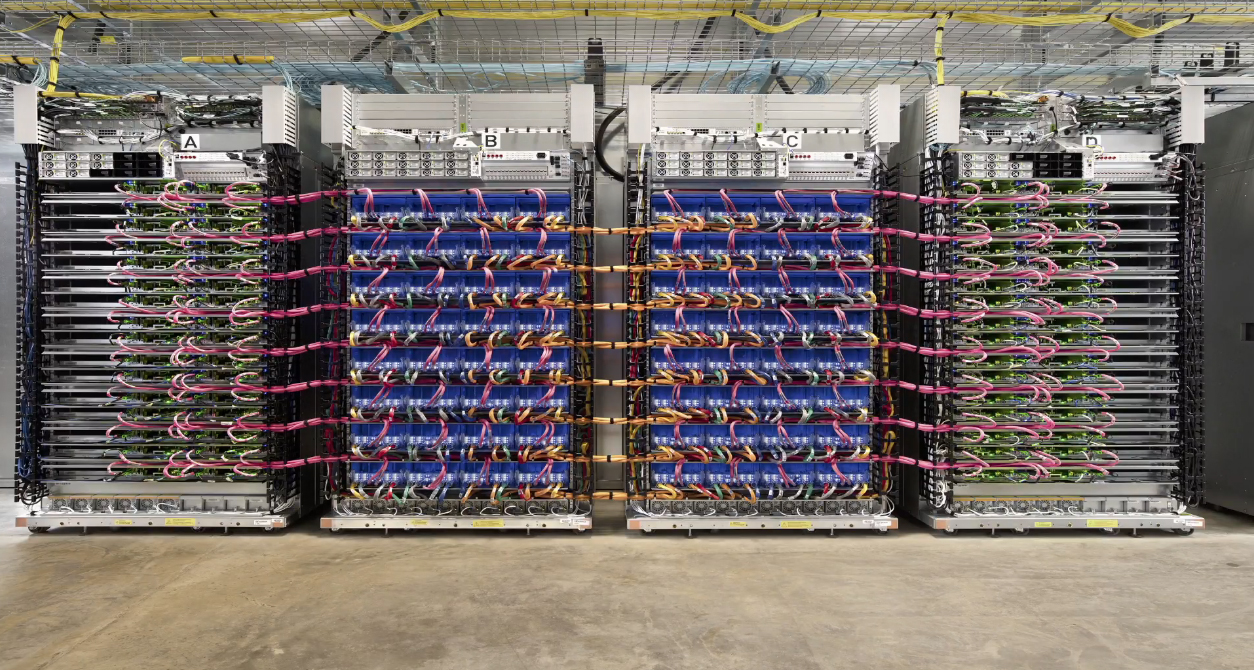

“Lens” is part of Google’s greater pivot to an AI-focused future that was on full display at I/O on Wednesday. CEO Sundar Pichai also revealed an artificial intelligence chip, offered as a standalone product and through a cloud-based service, that gives exclusive access to its AI computing. In doing so, Google is basically removing the velvet rope from the machine learning systems that have allowed it to make rapid advances in image recognition, automated translation, and robotics.

Google Home, its personal AI and primary competitor to Amazon Echo, will be able to act as a speakerphone, calling any phone just by voice command.

Google Home bolstered its media offerings too. The Home is now compatible with Spotify (previously it streamed music only from Google Play) and can now interact with TVs via Chromecast.

Also announced at I/O: Google’s AI assistant is coming to iOS. Previously, the smartphone assistant was available only on Android phones.

This article was featured in the InsideHook newsletter.