The coming ubiquity of “deep fakes” is terrifying, but that’s nothing compared to what might follow.

Deep fakes (sometimes written as “deepfakes”), for the uninitiated, are videos made with editing technology that grafts real people’s faces onto other people’s bodies, making them appear to do or say anything the programmer wants. Some demonstration videos of the technology are chilling.

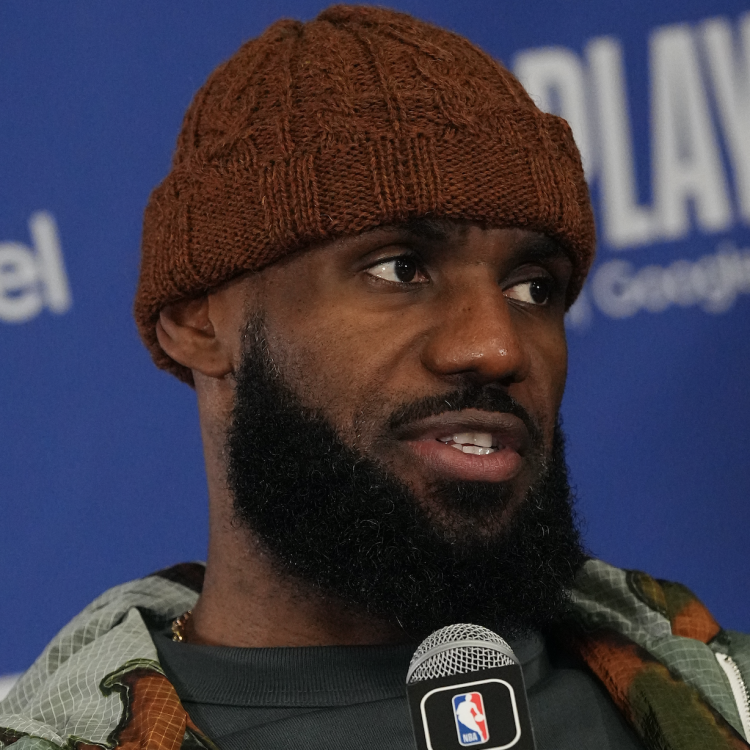

(AP Photo)

And while it’s primarily been used so far for pasting celebrity faces into porn, experts warn that there will soon come a day when a deep fake video is “leaked” online showing a powerful person (a nation’s leader, for instance) saying or doing something incredibly politically or personally compromising. It will be so convincing that an unaided observer won’t be able to tell it’s not real.

Bobby Chesney, the James Baker Chair and Associate Dean for Academic Affairs at the University of Texas School of Law, recently imagined a range of scenarios. One is the appearance of a “hard to debunk” audio recording from the closed-door Trump-Putin one-on-one in Helsinki in which the president, say, appears to abandon some allies. Another is a local law enforcement figure “caught” saying racist things in a community already rife with racial tension.

Chris Bregler, another expert on the panel and a senior scientist at Google, told the audience that deep fakes currently can’t trick sophisticated verification tools and would eventually be debunked. But in today’s lightning-fast social media environment, the old adage (fittingly, erroneously) credited to Mark Twain is truer than ever: “A lie can travel halfway around the world while the truth is still putting on its shoes.” The damage would already be done.

The key to combating deep fakes, the experts said, is raising awareness that this technology is out there, so that people hold off on buying into especially scandalous footage until it’s been vetted. But that kind of universal suspicion has a side effect that could cause more lasting damage than the fake videos themselves: the ultimate loss in the war of attrition for people’s trust.

“The more successful we are at getting people to be aware of the nature of this problem and to cultivate their skepticism about audio and video, the more space for creating for something in the paper we call the ‘liar’s dividend,’” Chesney said. “There’s the mirror image situation where the video is real, the audio is real, it does expose something wrong or embarrassing about that public figure, and that person has the shamelessness to deny it. This already happens. But how much more room is there to persuasively deny video and audio evidence when people have been pounded with the message — by us — ‘beware of deep fakes,’ ‘imagery can be manipulated,’ ‘video can be manipulated.’ So the cry of ‘fake news’ becomes the cry of ‘deep fake news.’ It is much more resonant because its success in getting people to be on guard.”

The “nightmare scenario,” as the University of Maryland Carey School of Law’s Danielle Citron put it, is a world in which the public feels that “nothing is believable” and “as we withdraw into our filter bubbles. . . there are no truths.”

In today’s political environment there could hardly be a worse time for that kind of pervasive doubt. Earlier this week President Donald Trump lambasted the “fake news” media and bluntly told his supporters, “Just remember, what you’re seeing and what you’re reading is not what’s happening.”

In the near future, people might have good reason to agree.

This article was featured in the InsideHook newsletter.